Your multi-device eLearning needs to run consistently and smoothly across the identified range of devices, screen sizes, browsers, and platforms if it is to deliver an engaging learning experience for your users.

But the sheer number of device-OS-browser combinations and the set of test parameters which need to be checked for EACH combination make the testing process complex, to say the least.

Based on our experience in designing, developing, and testing different types of eLearning (including Flash-based projects, courses created using Rapid Authoring Tools, and HTML-based projects for multi-device learning), I have listed five key considerations that can help you in testing your multi-device/responsive projects.

- Define the Testing Target Environment and Approach

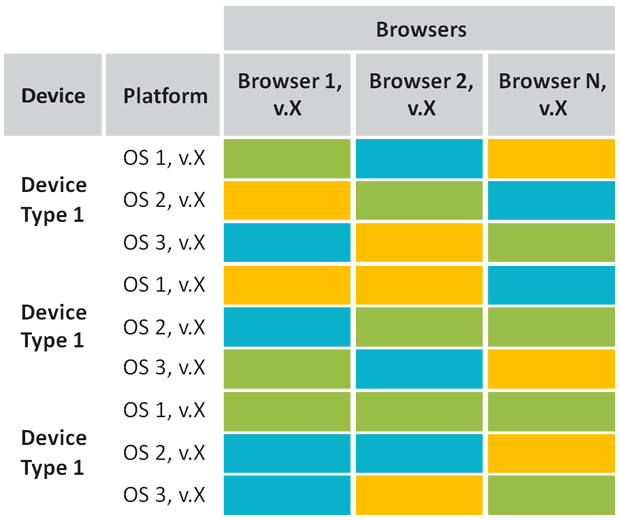

For a precise, limited specification, i.e. a very specific and narrow target range, defining a testing environment would be relatively easy. It might look as simple as this –

However, for a wider target range, an optimization approach would be helpful. This involves analyzing popularity, usage, and sales statistics for devices, browsers, and OSs to narrow down to a comprehensive, representative set.
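As a rough illustration of this narrowing-down step, the sketch below picks the most-used device-OS-browser combinations until a target share of learners is covered. The usage figures, the 90% target, and the function name `representative_set` are all hypothetical; in practice the numbers would come from your own analytics or published usage statistics.

```python
# Hypothetical usage shares (percent of learners) per device/OS/browser combination.
usage_share = {
    ("iPad", "iOS", "Safari"): 28.0,
    ("Windows desktop", "Windows", "Chrome"): 25.0,
    ("Android phone", "Android", "Chrome"): 20.0,
    ("iPhone", "iOS", "Safari"): 15.0,
    ("Windows desktop", "Windows", "Firefox"): 7.0,
    ("Android tablet", "Android", "Firefox"): 5.0,
}


def representative_set(shares, target_coverage=90.0):
    """Pick the most-used combinations until their summed share reaches the target."""
    selected = []
    covered = 0.0
    ranked = sorted(shares.items(), key=lambda item: item[1], reverse=True)
    for combo, share in ranked:
        if covered >= target_coverage:
            break
        selected.append(combo)
        covered += share
    return selected, covered


selected, covered = representative_set(usage_share)
for device, os_name, browser in selected:
    print(f"{device} / {os_name} / {browser}")
print(f"Covered share: {covered}%")
```

A simple cut-off like this is only a starting point; you may still want to add a low-usage combination by hand if a client specifically requires it.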

- Create a Testing Matrix

Once an optimal set of devices, OSs, and browsers is identified, manually work out all the permutations and combinations to create a testing matrix. The lowest specifications will form the parameters for minimum edge testing.

Tip: Your test cases will need to be refined and extended to make sure that different devices are covered. For example, take a scenario page made up of several scenes. The test case for desktops would define clicking the next/back arrow buttons as well as the numbered buttons for moving from one scene to the other. For the same scenario page on a tablet, the test case may additionally define horizontal swiping to move between scenes. Finally, for smartphones, where the scenario presentation changes to a non-interactive, more text-based view that is better suited to smaller displays, the test case needs to define only vertical swiping to scroll through the scenes.
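If you would like to cross-check a hand-built matrix, the permutations can also be generated with a short script. The sketch below is one possible way to do it, not a replacement for reviewing the matrix yourself; the device names and the `supported` mapping of browsers per OS are made-up sample data.

```python
from itertools import product

# Hypothetical sample data: (device, OS) pairs and candidate browsers.
devices = [
    ("Desktop PC", "Windows"),
    ("iPad", "iOS"),
    ("Android phone", "Android"),
]
browsers = ["Chrome", "Firefox", "Safari"]

# Assumed browser support per OS for this project; adjust to your own targets.
supported = {
    "Windows": {"Chrome", "Firefox"},
    "iOS": {"Safari", "Chrome"},
    "Android": {"Chrome", "Firefox"},
}

# Build every device/browser pairing, keeping only the combinations in scope.
matrix = [
    {"device": device, "os": os_name, "browser": browser}
    for (device, os_name), browser in product(devices, browsers)
    if browser in supported[os_name]
]

for row in matrix:
    print(f'{row["device"]:15} {row["os"]:8} {row["browser"]}')
```

Filtering against an explicit support list keeps impossible pairings out of the matrix, so testers are not assigned a combination that cannot exist.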

- Check the Availability of Physical Devices

The next point of consideration would be the availability of actual devices. Check if all the devices listed in the testing matrix are ‘physically’ available, and if required, evaluate if you can use tools/simulators to cover the missing ones.

Tip: As far as possible, use actual devices and setups for testing rather than relying on tools and simulators; the results will be more accurate.
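The availability check itself is easy to script once the matrix exists. The following sketch compares the devices the matrix requires against an in-house inventory and lists the ones that would need a tool or simulator; both lists and the function name `split_by_availability` are hypothetical.

```python
# Hypothetical devices required by the testing matrix.
matrix_devices = ["Desktop PC", "iPad", "Android phone", "Android tablet"]

# Hypothetical inventory of devices physically available to the test team.
available = {"Desktop PC", "iPad", "Android phone"}


def split_by_availability(required, in_house):
    """Separate required devices into those on hand and those that are missing."""
    physical = [d for d in required if d in in_house]
    missing = [d for d in required if d not in in_house]
    return physical, missing


physical, missing = split_by_availability(matrix_devices, available)
print("Test on actual devices:", physical)
print("Evaluate tools/simulators for:", missing)
```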

- Test, Test, and Re-Test

As far as the testing process goes, it may be more efficient to first test on one desktop, one tablet in portrait view, and the smallest smartphone in portrait view. This will allow you to validate content for all breakpoints as a first major stage of testing. It is advisable to perform this testing entirely manually on these three representative devices, one device at a time.

Tip 1: Wait till the initial issues are fixed and re-verified before expanding to test on other combinations.

Tip 2: When testing the other combinations, it can be more effective to go device-type-wise – for example, first test on all tablets, covering all applicable browsers and OSs in succession on each tablet; then move on to smartphones.
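The device-type-wise order in Tip 2 can be produced by sorting the remaining rows of the matrix. This is a minimal sketch with invented device names; the `TYPE_ORDER` ranking (tablets before smartphones) simply mirrors the example in the tip and is an assumption you can change.

```python
# Hypothetical combinations left to test after the first three-device pass.
runs = [
    {"type": "smartphone", "device": "Phone A", "os": "Android", "browser": "Chrome"},
    {"type": "tablet", "device": "Tablet B", "os": "iOS", "browser": "Safari"},
    {"type": "smartphone", "device": "Phone A", "os": "Android", "browser": "Firefox"},
    {"type": "tablet", "device": "Tablet A", "os": "Android", "browser": "Chrome"},
    {"type": "tablet", "device": "Tablet B", "os": "iOS", "browser": "Chrome"},
]

# Assumed order: finish all tablets before moving on to smartphones.
TYPE_ORDER = {"tablet": 0, "smartphone": 1}


def device_type_wise(test_runs):
    """Group runs by device type, then by device, so each device is finished in one go."""
    return sorted(
        test_runs,
        key=lambda r: (TYPE_ORDER[r["type"]], r["device"], r["os"], r["browser"]),
    )


ordered = device_type_wise(runs)
for run in ordered:
    print(run["type"], run["device"], run["os"], run["browser"])
```

Sorting by device within each type means a tester picks up a device once and covers all of its browsers in succession before putting it down.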

- Issue Logging

Log the issues in an efficient manner, so as to make grouping easier and reduce duplication.

Tip: Make smart use of keywords while logging issues (e.g. device/brand/OS/version/browser), either as a separate field or within the issue description.
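To show why keyword fields help, here is a small sketch of an issue record with separate device, OS, and browser fields, plus a helper that groups issues sharing the same summary. The `Issue` class, its fields, and the sample issues are hypothetical and not tied to any particular bug tracker.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Issue:
    summary: str
    device: str
    os: str
    browser: str


# Hypothetical logged issues.
issues = [
    Issue("Next button overlaps text", "iPad", "iOS", "Safari"),
    Issue("next button overlaps text ", "Android tablet", "Android", "Chrome"),
    Issue("Audio does not autoplay", "iPhone", "iOS", "Safari"),
]


def group_by_summary(logged):
    """Group issues whose summaries match after trimming and lower-casing."""
    groups = defaultdict(list)
    for issue in logged:
        groups[issue.summary.strip().lower()].append(issue)
    return dict(groups)


groups = group_by_summary(issues)
ios_issues = [i for i in issues if i.os == "iOS"]

for summary, members in groups.items():
    where = ", ".join(f"{m.device}/{m.browser}" for m in members)
    print(f"{summary}: {where}")
```

With the keywords held in their own fields, the same log can be filtered by OS or browser and likely duplicates surface as one group instead of several separate entries.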

As technology keeps evolving at breakneck speed, particularly with regard to mobiles and tablets, eLearning developers and testers like us will keep encountering new challenges and will need to find innovative solutions. I hope these key considerations come in handy and help you make your testing process more effective.