In one insurance firm, three regions operated with the same learning setup. The same LMS. The same custom eLearning modules. The same role-based journeys. Reporting looked consistent. Completion rates were steady across all three.

But performance did not follow the same pattern.

One region processed claims faster. Another showed repeated rework. The third depended heavily on escalation teams. Nothing in the learning data explained the difference. The system looked aligned, but the outcomes were not.

At first, the response stayed within learning. More courses were added. Some content was refreshed. Coverage improved. The system expanded, but the performance gap remained.

Over time, the question started to shift. Less about what learning exists. More about what people are actually able to do when work happens. That shift does not sit inside learning alone. It begins to connect with how the business operates.

Why Capability Gaps Do Not Show Up in Learning Data

Most organizations have a clear picture of their learning structure. Courses are mapped to roles. Completion is tracked. Custom eLearning fills known gaps. This creates a sense of coverage.

But coverage does not always translate into capability.

A training gap is usually visible. A new tool comes in, and there is no training. A policy changes, and people need an update. The response is clear: build or source content.

Capability gaps behave differently. They tend to appear in ways that are harder to isolate.

- The same process takes longer in one team, even when training is identical

- Errors repeat at specific steps, not across the entire workflow

- Employees complete programs but still depend on support for routine decisions

- Performance varies even when learning inputs remain stable

- Managers request more training, but the requests do not point to a clear gap

- Teams follow procedures but struggle when conditions change slightly

In one case, a company compared audit data with LMS records. Completion rates were high across teams. Still, certain errors kept appearing in similar situations. The issue was not missing training. It was how decisions were made under real conditions.
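A comparison like this can be sketched in a few lines. The example below is a minimal illustration, not the method the company used: the team names, workflow steps, thresholds, and figures are all hypothetical. It looks for teams where completion is high but audit errors still cluster at one step.

```python
from collections import Counter

# Hypothetical data: team names, step names, and numbers are illustrative only.
lms_completion = {"team_a": 0.96, "team_b": 0.95, "team_c": 0.97}

# One (team, workflow_step) pair per audit finding.
audit_errors = [
    ("team_a", "coverage_check"),
    ("team_b", "coverage_check"),
    ("team_b", "coverage_check"),
    ("team_b", "coverage_check"),
    ("team_b", "payout_calc"),
    ("team_c", "intake"),
    ("team_c", "payout_calc"),
]


def error_hotspots(errors, min_errors=3, min_share=0.6):
    """Map each team to the single step that holds most of its audit errors.

    Teams with fewer than min_errors findings, or with no step reaching
    min_share of their findings, are left out.
    """
    by_team = {}
    for team, step in errors:
        by_team.setdefault(team, Counter())[step] += 1

    hotspots = {}
    for team, counts in by_team.items():
        total = sum(counts.values())
        step, count = counts.most_common(1)[0]
        if total >= min_errors and count / total >= min_share:
            hotspots[team] = (step, count / total)
    return hotspots


def trained_but_struggling(completion, errors, min_completion=0.9):
    """Teams with high completion whose errors still cluster at one step."""
    return {
        team: hotspot
        for team, hotspot in error_hotspots(errors).items()
        if completion.get(team, 0.0) >= min_completion
    }


flagged = trained_but_struggling(lms_completion, audit_errors)
```

With this sample data, only the team whose errors concentrate at a single step is flagged, even though all three teams report similar completion. The thresholds are assumptions and would need tuning against real audit volumes.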

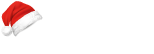

This is where the diagnostic approach starts to shift. Data begins to come from operational systems, not just learning platforms. Roles are broken into smaller capability areas. Learning becomes one part of a larger system.

Once capability is defined this way, it does not stay within L&D. It begins to connect with other functions that influence how work actually gets done.

How Cross-Functional Alignment Starts Shaping the System

When capability is tied to real work, different parts of the organization begin to see the same problem from different angles. L&D cannot define it alone.

HR focuses on roles and progression. Operations looks at execution. Risk identifies exposure. IT manages the systems where work happens. Each of these functions works with its own data and priorities.

In one organization, capability design started within HR. The framework looked structured, but performance gaps remained. When operations data was added, patterns became clearer. Risk then highlighted areas where those gaps carried compliance impact. IT had to adjust workflows to reflect updated expectations.

This did not happen in one step. It evolved over time.

As more functions became involved, the system started to move away from isolated learning initiatives. It began to take the shape of something broader. A structure that connects roles, workflows, systems, and outcomes.

That shift creates a need for consistency. Capability needs to be defined in a way that holds across the organization.

Designing Capability Frameworks That Reflect Real Work

Frameworks often begin with structure. Skills are defined. Levels are assigned. Roles are mapped. This creates clarity at a high level, which is useful at the start.

The difficulty appears when frameworks meet real work conditions.

Some frameworks remain too broad. Others do not adjust to variations in how work is done across regions or teams. Over time, they begin to drift away from actual use.

In one case, a framework defined capabilities correctly on paper but did not connect to the systems where work happened. Employees understood expectations but struggled to apply them during real tasks.

Where Frameworks Begin to Lose Alignment

- They stay fixed while processes continue to change

- They describe roles but miss task-level differences

- They do not connect with workflow systems

- They focus on coverage rather than application

- They are updated only after gaps become visible

Frameworks start to hold value when they stay close to how work actually unfolds. Not how it is documented.

Once frameworks begin to influence work, another layer becomes necessary. Ownership needs to be defined. Someone has to maintain and adjust them as the business evolves.

Governance Models That Define Ownership and Continuity

As capability frameworks begin to shape real work, the question of ownership becomes unavoidable. Without it, updates slow down and alignment weakens over time.

Governance, in this context, is not about control. It is about clarity across functions.

Different parts of the system require defined ownership. Not just for design, but for ongoing updates and validation. This often leads to a layered model rather than a centralized one.

In one organization, governance started within L&D. Over time, it expanded. HR retained strategic ownership. Operations validated capability relevance. Risk ensured compliance alignment. IT handled system integration.

The structure did not simplify the system. It made responsibilities clearer.

Once governance is in place, attention moves to how systems support it. Technology becomes a key factor in whether the model holds or breaks.

Aligning Digital Learning Solutions with Enterprise Systems

Most organizations already have multiple learning tools in place. LMS platforms, content libraries, custom eLearning, reporting systems. Each addresses a specific need.

The issue is not availability. It is how these systems connect.

When learning is treated as architecture, technology needs to align with how work happens and how performance is tracked.

Where Integration Gaps Tend to Appear

- Learning data remains within the LMS and does not connect with business systems

- Capability tracking is separate from performance tracking

- Reporting focuses on activity but does not reflect outcomes

- Workflow systems operate independently from learning inputs

- Data does not move easily between platforms

- Insights remain fragmented across functions

In one case, a company had a strong set of digital learning solutions and a large custom eLearning library. Still, learning data did not connect with operational systems. Completion looked consistent. Performance varied.

Some organizations build integration layers to address this. Others adjust workflows so that capability signals appear within the systems used for daily work.

Without this alignment, enterprise digital learning remains disconnected, even when it is well developed.

As systems begin to connect, measurement starts to change as well.

Measurement and Sustainability in Enterprise Learning Systems

Measurement often begins with activity. Completions, participation, assessment scores. These are easy to track and report.

They do not always reflect capability.

As systems evolve, measurement starts to include performance signals. Process efficiency. Error reduction. Decision quality under real conditions.

In one organization, learning data was compared with service metrics. Completion rates stayed stable. Performance improved only in areas where workflow changes supported learning updates.

This made measurement less clean, but more meaningful.
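As a rough illustration of that kind of comparison, the sketch below groups process areas by whether a workflow change accompanied the learning update and compares the average drop in errors. The area names, field names, and error counts (per 1,000 cases) are hypothetical, and the result shows an association in sample data, not a causal finding.

```python
from statistics import mean

# Hypothetical records: errors are counts per 1,000 cases before and after
# a learning update; workflow_changed marks areas where the workflow was
# also adjusted to support the update.
areas = [
    {"area": "intake", "workflow_changed": True, "errors_before": 120, "errors_after": 70},
    {"area": "assessment", "workflow_changed": True, "errors_before": 90, "errors_after": 60},
    {"area": "settlement", "workflow_changed": False, "errors_before": 100, "errors_after": 98},
    {"area": "escalation", "workflow_changed": False, "errors_before": 80, "errors_after": 82},
]


def mean_error_reduction(rows, workflow_changed):
    """Average drop in errors per 1,000 cases for one group of areas.

    Returns None when no area belongs to the requested group.
    """
    drops = [
        row["errors_before"] - row["errors_after"]
        for row in rows
        if row["workflow_changed"] == workflow_changed
    ]
    return mean(drops) if drops else None


with_workflow_change = mean_error_reduction(areas, workflow_changed=True)
without_workflow_change = mean_error_reduction(areas, workflow_changed=False)
```

Completion data alone would not separate these two groups. The difference only appears once a performance signal is placed alongside it.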

Sustainability then becomes part of the system. Capability frameworks require regular updates. Data inputs need to stay current. Cross-functional alignment has to continue beyond initial rollout.

Systems that treat learning as architecture tend to adjust over time. Others expand learning without seeing the same level of impact.

How Upside Learning Supports Capability Architecture

Upside Learning works at the point where learning connects with business systems. The work is not limited to content development. It focuses on how capability is structured and sustained.

During diagnostics, the focus moves beyond course mapping. Capability gaps are identified using operational data and workflow patterns. This allows a clearer view of where performance breaks down.

Framework design stays close to real work. Capability structures reflect how roles operate in practice, not just how they are defined.

On the technology side, custom eLearning and digital learning solutions are aligned with enterprise systems. Learning connects with workflows, platforms, and reporting layers.

Governance and measurement are built into the system. Ownership is defined across functions. Outcomes are tracked using both learning and performance data.

This supports a broader learning transformation strategy, one that moves toward organizational learning transformation, where capability is treated as a system across the enterprise.

Over time, patterns become visible. Systems built this way tend to show more consistent movement in capability. Others continue to expand their learning without seeing the same shift.

In most cases, the difference is not in how much learning is available, but in how closely it connects to how work actually gets done. That connection takes structure, not just content.

Design that structure with intent, and the system starts to behave differently.

Contact Upside Learning to design and implement a capability-driven learning architecture that connects learning directly with business performance.
